This Feminist AI Voice Assistant Wants To Challenge Gender Bias

Posted by Eyerys on June 17th, 2019

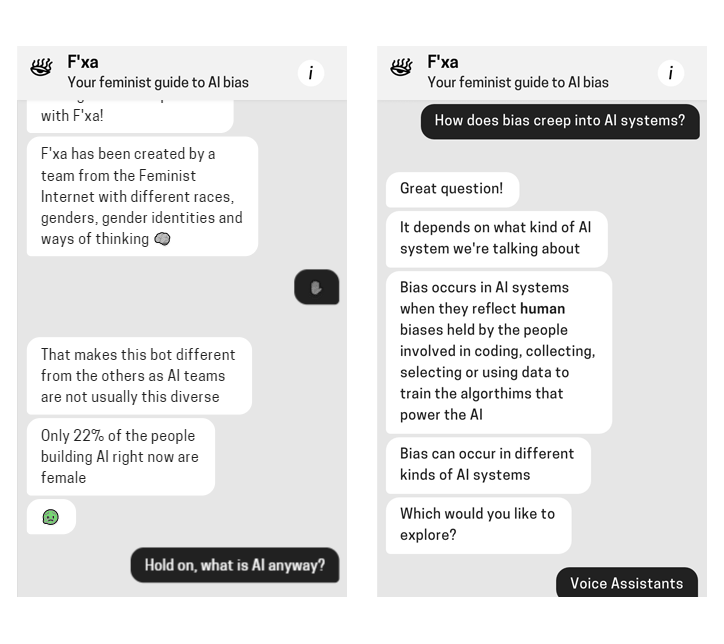

As long as there are women who see themselves as unequal to their male counterparts, feminism is here to stay. The Feminist Internet, a non-profit working to prevent biases from creeping into AI, has created F’xa. This feminist voice assistant was created to teach users about AI bias and to suggest how they can avoid reinforcing what the group says are harmful stereotypes.

In its report I’d Blush If I Could (titled after Siri’s response to “Hey Siri, you’re a bitch”), UNESCO noted that voice assistants often react to verbal sexual harassment in an “obliging and eager to please” manner. The reason is that these assistants were created by teams made up mostly of men, and those male-dominated teams made the industry-leading voice assistants female by default. The assistants typically have female names, too, and are designed to have feminine voices.

Just think about it: Microsoft's Cortana was named after a synthetic intelligence in the video game Halo that projects itself as a sensuous unclothed woman. Apple's Siri, on the other hand, means "beautiful woman who leads you to victory" in Norse. Google Assistant has a more gender-neutral name than its two counterparts, but its default voice is female.

In these days of hands-free, voice-enabled technology, the tasks most commonly given to voice assistants mirror jobs historically associated with women: setting alarms, waking the user up in the morning, creating to-do lists, putting together shopping lists, and even fielding 'naughty' questions. Another contributor to this bias is that people typically prefer to hear a male voice when it comes to authority, but a female voice when they need help. This, again, fuels gender bias. F’xa aims to explain that these biases do exist.
It also aims to teach people to promote gender equality by challenging gender roles in voice assistants, just as they should be challenged in real life. To make this happen, F’xa was built with feminist values in mind, so that it responds to user queries in line with feminist beliefs and avoids reinforcing bias and stereotypes.